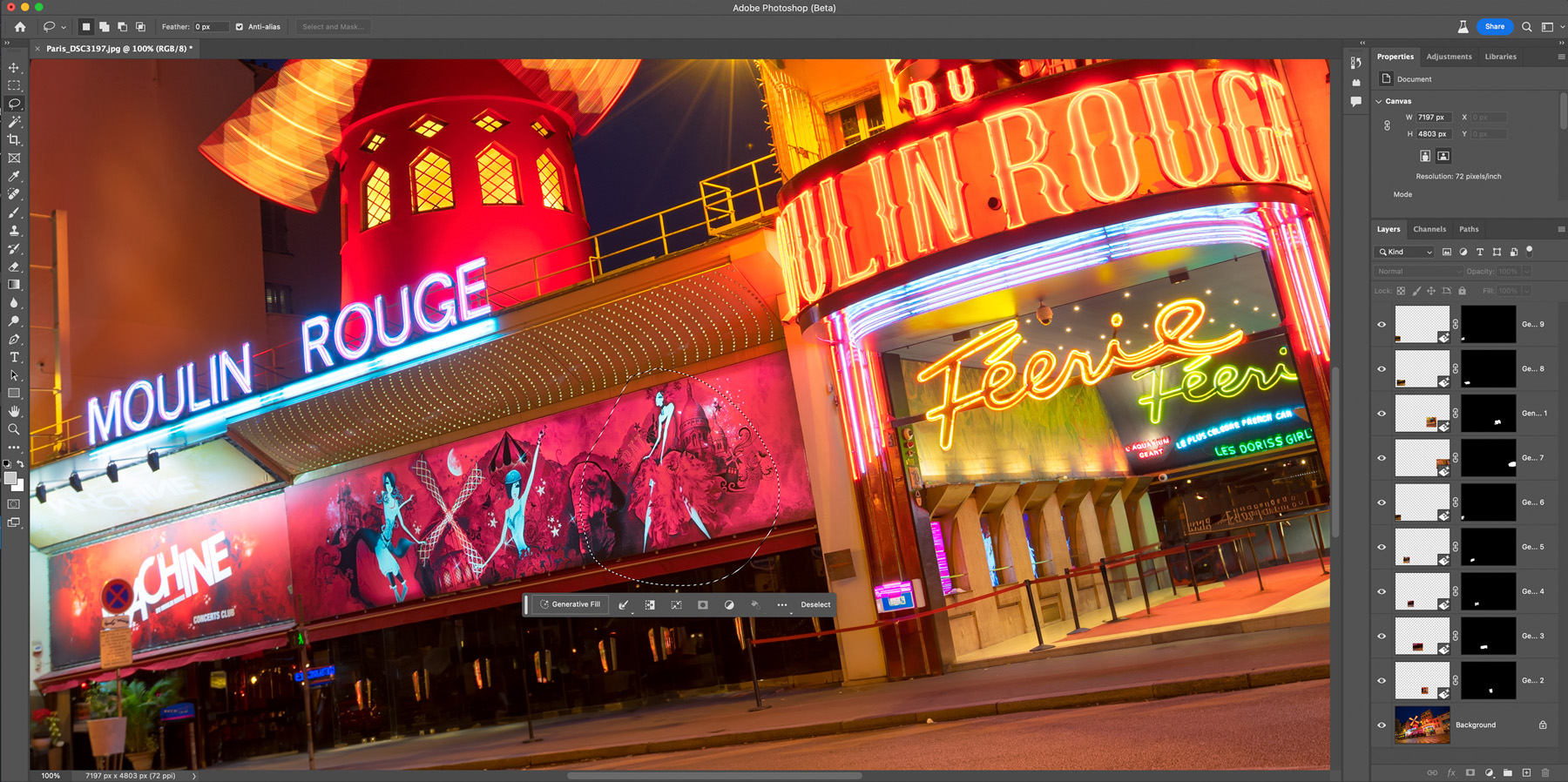

Photoshop Beta with Generative Fill

Adobe recently released a new Photoshop Beta version that now also includes Generative Fill. This is an evolution of the content-aware tool that has been there for a long time, but this time it uses AI image generation to create the fill.

I have been playing with it since it was released, and today I will take a look at the uses most relevant to me as a photographer: the removal of objects, canvas expansion, and object generation.

Using Generative Fill is easy. You just select a part of your image and a small popup shows up. You select Generative Fill, and in the new popup, if you want, you can add keywords. But it can also be left blank. Once you confirm by selecting Generate, Photoshop will create three versions for you. You can then view them, or regenerate the result. Then you can select another part of the image, even the just-generated parts, and continue.

Removal of objects

This one is the most interesting part for me, as a photographer. It is not that easy to get rid of things in a photo, and one has to use many different techniques. This makes things much easier. The only issue is the quality of the result. While it looks good, the resolution is lower and there is a loss of detail compared to the traditional way of creating multiple exposures and then blending them (as shown here). But that technique is not always possible, or you just don't have time to wait.

I can also imagine this helping a lot with problems like burnt-out highlights and lens flares. If you miss these when taking the photo, they are hard to fix afterwards.

Here I edited one of my photos from Paris, where I removed all the people waiting in front of the Moulin Rouge. What do you think?

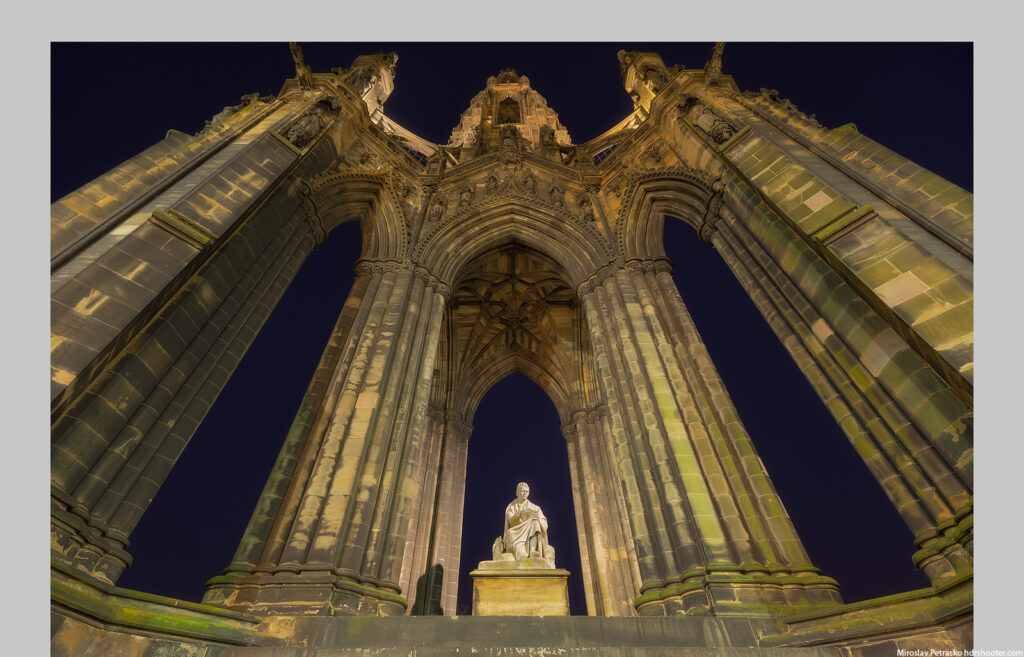

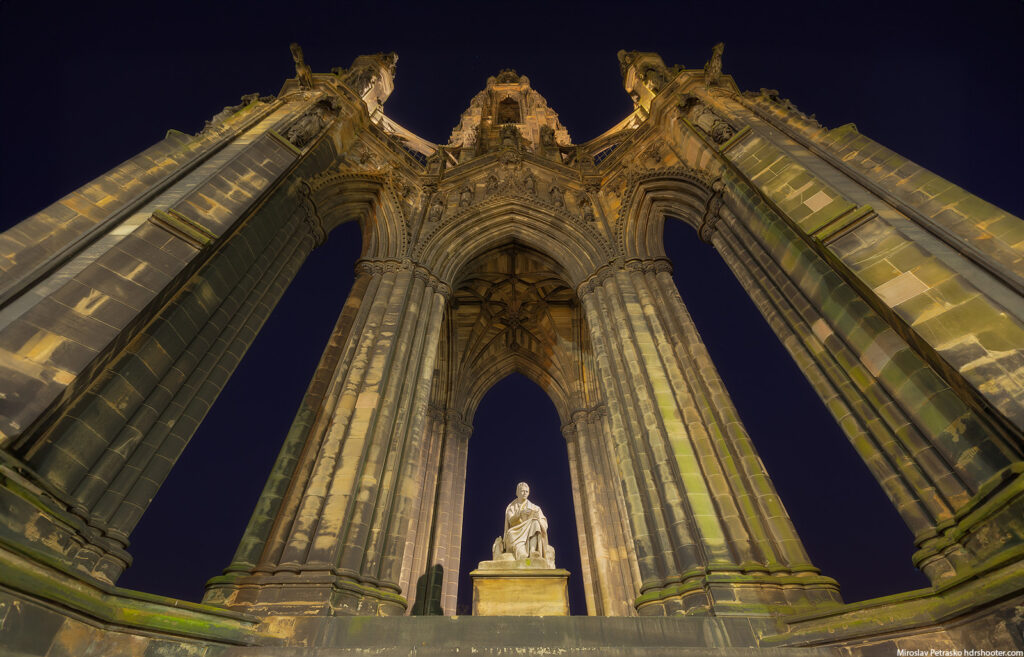

Expanding the canvas with Generative Fill

Another use I can see is expanding the canvas. There can be many reasons why you could not capture all that you wanted: something in the way, the lens not wide enough, people standing around, a small space to shoot from, or something else. Photoshop Beta with Generative Fill will give you multiple options, and the results are quite impressive. From what I saw, having a transparent background sometimes gives better results. Also, expanding existing photos gives better results than trying to add new elements to them. It just looks more natural.

Here, first, is an example of the Scott Monument in Edinburgh, where I could not move further away from it because of a road. Generative Fill nicely expanded the photo, giving the monument more space in the shot.

Or a slightly different example, with a photo from the 5 Fingers in the Austrian Alps, where I used Generative Fill to change a simple photo into a panorama. But yeah, the loss of quality is visible. I included two different versions here, but you can regenerate the result as many times as you want.

Generating images

The last way you can use Generative Fill is to create completely new elements in empty or existing images. As mentioned, you can keep selecting parts of the image and so add more elements to it.

Here, I took the initial image from Santa Magdalena in Italy. First I selected the bottom part and used Generative Fill with the keywords “pond reflection” to fill it in. Then I selected a few areas around the newly generated parts and, with the keyword “rocks”, added a few rocks to make it more interesting.

I did try to add a few other things, like horses or a bench, but the results were quite bad. They just did not blend into the image at all.

Overall, the results are impressive, especially when removing objects and expanding the canvas. If you just want to add something to a photo, the results are a bit hit-and-miss, and you will have to try multiple times to get a usable image. But this is still in beta, and with the progress of AI image generation in recent months, I think this will get better really soon.

Have you tried Generative Fill in Photoshop Beta? What do you think of it?